Announcing the Promethean Collective Curriculum

A comprehensive approach for Civil Society Organizations to build their confidence, clarity, and courage for an AI age.

My late uncle Steve was a Jesuit priest, a committed teacher & educator, and a lifelong student of rhetoric—especially homiletics. One of his core beliefs, which I heard him repeat countless times and apply to nearly every form of human endeavor, was a statement so simple it bordered on nonsensical: the main thing is to know what the main thing is, and to keep the main thing the main thing.

It is the kind of line that sounds obvious until suddenly it isn’t, until the world becomes noisy, fast-moving, and distracting enough that clarity begins to feel like a luxury. And then a sentence that once seemed almost playful reveals itself as ballast.

For The Promethean Collective, the main thing is ensuring that Civil Society organizations—from every country, every culture, and every part of the political spectrum—have the knowledge, the frameworks, the context, and the networks to confidently and competently apply their own values and expertise in public discourse on AI. We do this because we believe it is a necessary condition for AI—or any emerging technology—to serve the common good.

For Civil Society itself, the main thing is even more elemental: giving institutional form to the lived experience of their communities. Buffer. Beacon. Translator. Whatever the moment requires, as we all move—slowly—into an uncertain future.

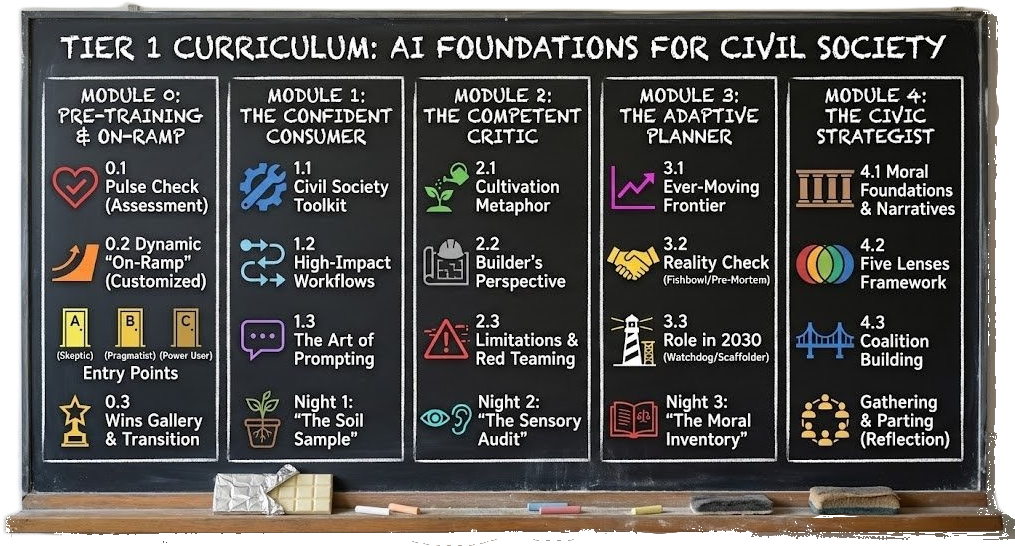

Today, we are announcing the Promethean Collective’s three-tier curriculum, built to strengthen that capacity across roles, regions, and responsibilities.

Is This Just Another AI Literacy Program?

No, but we understand why you ask. The past two years have produced an avalanche of “AI for nonprofits” trainings, most of them focused on operational efficiency: how to write better fundraising emails, how to automate your social media, how to cut administrative costs. These aren’t bad things. But they’re not the main thing.

Civil Society’s role in democratic life isn’t to become more efficient at executing its current playbook. It’s to sense when the playbook itself needs rewriting—when systems stop serving the people they’re meant to serve, when new forms of harm emerge from complexity rather than malice, when the gap between official narratives and lived experience becomes too wide to ignore.

This curriculum exists to help organizations hold onto that role—strategically, collaboratively, and resiliently—no matter how quickly AI evolves or how dramatically the social fabric shifts.

The Four Modules

Tier 1, AI Foundations for Civil Society, is built around four modules that take participants on a deliberate journey—from building practical confidence, through grounding technical skills in organizational values, to developing strategic judgment for coalitions and community meaning-making.

Module 1: The Confident Consumer

We begin here because this technology is already being used—by your funders, your partners, the governments and platforms your communities depend on. The conversation is happening. The decisions are being made. And if Civil Society can’t engage competently, it won’t just miss out on efficiency gains. It will lose its seat at tables where choices are being made about the communities it serves.

This module builds practical fluency with AI tools—the kind that lets you experiment, test boundaries, and develop an embodied sense of what these systems actually do. You’ll map your daily bottlenecks to specific tools, move from one-off “chats” with AI to integrated workflows, and develop prompting skills that treat AI as delegation rather than magic.

The goal isn’t organizational efficiency for its own sake. Every hour you reclaim from drudgery is an hour you can redirect to work that requires human judgment, relational depth, and community presence.

Module 2: The Competent Critic

AI systems don’t announce their failures. They produce confident outputs whether they’re right or wrong. If you can’t identify where these systems break—where they hallucinate, oversimplify, or reproduce embedded biases—you can’t protect the communities you serve.

This module builds critical evaluation capacity. You’ll learn how AI products are built, what trade-offs their creators face, and why even well-intentioned systems produce problematic outputs. You’ll practice “red-teaming”—intentionally probing for weaknesses—and develop the skeptical eye Civil Society needs to hold AI systems accountable.

The key insight: you can critique things you don’t understand, but there’s a difference between critique that deepens understanding and critique that’s just noise. This module builds the former.

Module 3: The Adaptive Planner

Once you can actually use these tools, the urgent question becomes: should you? And how? Technical competence without values clarity is dangerous precisely because it’s so seductive. The tools work. They save time. But “it works” isn’t a sufficient decision-making framework for organizations whose entire purpose is serving communities with integrity.

This module asks participants to examine their organization’s moral foundations—not the mission statement on your website, but the actual lived values that guide daily choices. You’ll identify the “ever-moving frontier” between what should be automated and what must remain human. You’ll learn to navigate different moral vocabularies across communities.

And you’ll grapple with temporal complexity: AI systems update rapidly, sometimes every few weeks. Community trust builds slowly. Wisdom accumulates over years. Civil Society’s comparative advantage is the ability to hold multiple tempos simultaneously—responding quickly to emergencies while building trust over decades.

Module 4: The Civic Strategist

This is where everything comes together. Where tool-knowledge and values-knowledge merge into judgment. Where you learn to navigate the messy middle—knowing when to use AI, when to resist it, when to demand transparency, when to build coalitions with others facing similar choices.

Participants learn to use the Five Lenses Framework (introduced in our essay “This boy is Ignorance. This girl is Want” and developed in “Humanizing the Machine”) to analyze AI systems from multiple perspectives: protection from harm, redistribution of gains, voice and power in decision-making, public provisioning versus private control, and long-term systems thinking.

You’ll learn to build coalitions across moral and cultural divides—finding common ground with organizations that share your concerns but frame them differently. An environmental organization and a religious liberty organization might both oppose facial recognition surveillance, but for completely different reasons. Your job is to build the coalition without erasing those differences.

And you’ll learn to become what we call “meaning scaffolders”—people who can help their communities make sense of complex, opaque sociotechnical systems. Not building the meaning for them, but providing temporary support structures that enable communities to construct their own interpretations of technological change. The scaffolding comes down when capacity exists; what remains is something the community built for itself.

Building Capacity That Stays

Here’s something we’ve learned: there are no Civil Society AI experts yet. Just people with more practice.

The technology is too new, and evolving too rapidly, for anyone to claim mastery. What matters isn’t importing external expertise; it’s developing internal capacity that can adapt as the technology changes and as your organization’s needs evolve. And there is early evidence that organizations that start early enjoy compounding benefits, if their cultures can learn from early failures rather than being hamstrung by them and, crucially, if they reinvest the staff time and attention freed up by their successes.

This is why we’re building a Champions Network into our curriculum design. The most valuable learning happens when people in different roles across an organization work together to translate general lessons to their (your) specific context, and then help colleagues see and seize the benefits.

The prompts that work best for your organization won’t come from a generic library. The failure modes you discover in your context are valuable knowledge. When these insights get shared across your organization—when people who actually understand your mission, your constraints, your communities start building contextual knowledge together—that’s when real capacity gets built. Not by hiring consultants who parachute in with generic best practices, but by growing expertise from the inside that actually fits how you work.

An Invitation

This is the beginning of a long project—decades long, perhaps. A generational investment in the strength and wisdom of Civil Society. We are not simply launching a training program. We are building a shared civic language, a global network, and a community of practice capable of navigating an AI-shaped world with integrity.

If you belong to an organization that listens carefully, that builds trust, that carries the stories of communities others overlook, then this curriculum is for you. If you are trying to understand how to lead through uncertainty without losing your center of gravity, this curriculum is for you.

Civil Society doesn’t need to match the speed of technology. It needs to keep its main thing at the center of its work: the people it serves.

Click here to register interest in joining a pilot training, or to provide feedback.

Learn more about The Tier 1 Curriculum | The Educate Pillar | Our Programs
